
FreeNAS 9.10 on VMware ESXi 6 Guide

This guide installs FreeNAS 9.10 under VMware ESXi and then uses ZFS to share the storage back to VMware. It is roughly based on Napp-It’s All-In-One design, except that it uses FreeNAS instead of OmniOS.

Disclaimer: I should note that FreeNAS does not officially support running virtualized in production environments. If you run into any problems and ask for help on the FreeNAS forums, I have no doubt that Cyberjock will respond with “So, you want to lose all your data?” So, with that disclaimer aside, let’s get going.

Update: Josh Paetzel wrote a post on Virtualizing FreeNAS, so this is somewhat “official” now. I would still exercise caution.

Update 2: This guide was originally written for FreeNAS 9.3; I’ve updated it for FreeNAS 9.10. Also, I believe Avago LSI P2. I’ve removed my warning on using P2. Added sections 7.

Resource reservations) and 1. Get proper hardware.

Example 1: Supermicro 2U Build. SuperMicro X1. SL7-F (which has a built-in LSI2. HBA). Xeon E3-1. ECC Memory. 6 hotswap bays with 2. TB HGST HDDs (I use RAID-Z2). 4 2. Intel DC S3700’s for SLOG / ZIL, and 2 drives for installing FreeNAS (mirrored).

Example 2: Mini-ITX Datacenter in a Box Build.

X10SDV-F (built-in Xeon D-1. ECC Memory. IBM 1. LSI 9220-8i HBA. 4 hotswap bays with 2. TB HGST HDDs (I use RAID-Z). 2 Intel DC S3. SLOG / ZIL, and one to boot ESXi and install FreeNAS to.

Hard drives.

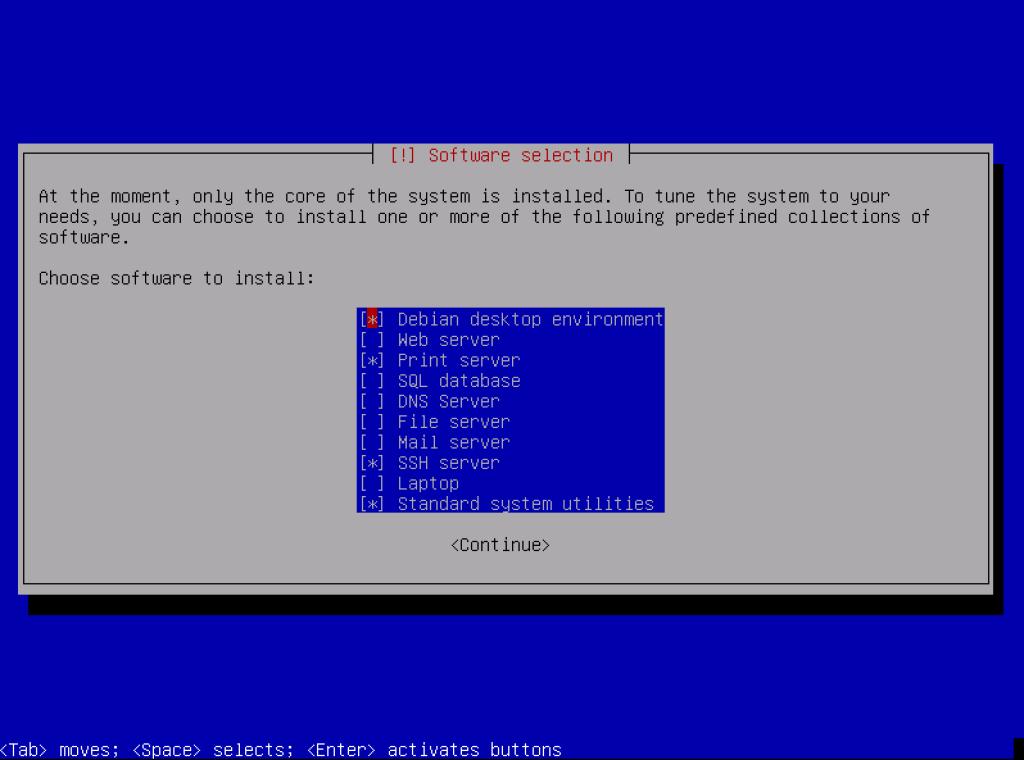

See info on my Hard Drives for ZFS post. The LSI2308/M1. I like to do two DC S3. SLOG device and then do a RAID-Z2 of spinners on the other 6 slots. Also get one drive (preferably two for a mirror) that you will plug into the SATA ports (not on the LSI controller) for the local ESXi data store. I’m using DC S3. 70. I have, but this doesn’t need to be fast storage; it’s just to put FreeNAS on.

2. Flash HBA to IT Firmware.

As of FreeNAS 9. IT mode P20 (looks like it’s P2. I strongly suggest pulling all drives before flashing.

LSI 2. IT firmware for Supermicro. Here are instructions to flash the firmware: http://hardforum. Supermicro firmware: ftp://ftp. Driver/SAS/LSI/2. Firmware/IT/

For IBM M1. LSI Avago 9220-8i. Instructions for flashing firmware: https://forums. LSI / Avago Firmware: http://www.

If you already have the card passed through to FreeNAS via VT-d (steps 6-8) you can actually flash the card from FreeNAS using the sas. IT mode so I’m just upgrading it).

Package_P2. 0_IR_IT_FW_BIOS_for_MSDOS_Windows. Firmware/HBA_9. 21. IT/2. 11. 8it. bin -b sasbios_rel/mptsas.

LSI Corporation SAS2 Flash Utility
Version 1. 6. 0. 0.
Copyright (c) 2. 00. LSI Corporation. All rights reserved.
Advanced Mode Set
Adapter Selected is a LSI SAS: SAS2. B2)
Executing Operation: Flash Firmware Image
Firmware Image has a Valid Checksum.
Firmware Version 2.
Firmware Image compatible with Controller.
Valid NVDATA Image found.
NVDATA Version 1.
Checking for a compatible NVData image..
NVDATA Device ID and Chip Revision match verified.
NVDATA Versions Compatible.
Valid Initialization Image verified.
Valid BootLoader Image verified.
Beginning Firmware Download..
Firmware Download Successful.
Verifying Download..
Firmware Flash Successful.
Resetting Adapter..

(Wait a few minutes; at this point FreeNAS finally crashed. Power off FreeNAS, and then reboot VMware.)

Warning on P2. Some earlier versions of the P2. P2. 0. 0. 0. 0. 4. If you can’t P20 in a version later than P2. P19 or P16.

3. Optional: Over-provision ZIL / SLOG SSDs.

If you’re going to use an SSD for SLOG you can over-provision it. You can boot into an Ubuntu LiveCD and use hdparm; instructions are here: https://www. SSD_Over-provisioning_using_hdparm You can also do this after VMware is installed by passing the LSI controller to an Ubuntu VM (FreeNAS doesn’t have hdparm). I usually over-provision down to 8. GB.

Update 2. 01. But you may want to only go to 2. GB depending on your setup! One of my colleagues discovered 8. GB over-provisioning wasn’t even maxing out 1. Gb network (remember, every write to VMware is a sync so it hits the ZIL no matter what) with 2 x 1. Gb fiber lagged connections between VMware and FreeNAS. This was on an HGST 8. Intel DC S3700… and it wasn’t a virtualized setup. But thought I’d mention it here.

4. Install VMware ESXi 6.

The free version of the hypervisor is here. I usually install it to a USB drive plugged into the motherboard’s internal header.

Under Configuration, Storage, click Add Storage. Choose one (or two) of the local storage disks plugged into your SATA ports (do not add a disk on your LSI controller).
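For the optional over-provisioning in step 3, hdparm's -N option expects a count of visible sectors rather than a size in GB, so a little arithmetic is needed first. Here is a minimal sketch of that conversion. The 10 GB target, the /dev/sdX device name, and the 512-byte logical sector size are assumptions for illustration only (they are not figures from this guide), so check what your SSD actually reports before running anything.

```python
# Sketch: convert a target visible capacity into the sector count used with
# hdparm -N. All sizes and device names below are hypothetical examples.

SECTOR_BYTES = 512  # assumption: the SSD reports 512-byte logical sectors


def sectors_for_capacity(target_gb: float, sector_bytes: int = SECTOR_BYTES) -> int:
    """Return how many logical sectors cover target_gb decimal gigabytes."""
    target_bytes = int(target_gb * 1000**3)
    return target_bytes // sector_bytes


def hdparm_command(device: str, target_gb: float) -> str:
    """Build (but do not run) an hdparm command that limits the visible sectors.

    The 'p' prefix makes the setting persistent across power cycles.
    """
    sectors = sectors_for_capacity(target_gb)
    return f"hdparm -Np{sectors} --yes-i-know-what-i-am-doing {device}"


if __name__ == "__main__":
    # Hypothetical example: expose only 10 GB of the SSD to the OS.
    print(hdparm_command("/dev/sdX", 10))
    # -> hdparm -Np19531250 --yes-i-know-what-i-am-doing /dev/sdX
```

The script only prints the command so you can review it first. A wrong sector count or device name here is destructive, so I would paste it by hand rather than execute it automatically.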
5. Create a Virtual Storage Network.

For this example my VMware management IP is 1. VMware Storage Network IP is 1. FreeNAS Storage Network IP is 1.

Create a virtual storage network with jumbo frames enabled. VMware, Configuration, Add Networking. Virtual Machine… Create a standard switch (uncheck any physical adapters).

Add Networking again, VMKernel, VMKernel… Select vSwitch1 (which you just created in the previous step), and give it a network different than your main network. I use 1. 0. 5. 5. IP and 2. 55. 2. 55.

Some people are having trouble with an MTU of 9. I suggest leaving the MTU at 1. MTU of 9000. Also, if you run into networking issues look at disabling TSO offloading (see comments).

Under vSwitch1 go to Properties, select vSwitch, Edit, change the MTU to 9. Answer yes to the no active NICs warning. Then select the Storage Kernel port, edit, and set the MTU to 9.

6. Configure the LSI 2. Passthrough (VT-d).

Configuration, Advanced Settings, Configure Passthrough. Mark the LSI2. 30. You must have VT-d enabled in the BIOS for this to work, so if it won’t let you for some reason, check your BIOS settings. Reboot VMware.

7. Create the FreeNAS VM.

Download the FreeNAS ISO from http://www.

Create a new VM, choose custom, put it on one of the drives on the SATA ports, Virtual Machine version 1. Guest OS type is FreeBSD 64-bit, 1 socket and 2 cores. Try to give it at least 8. GB of memory.

On Networking give it two adapters: the 1st NIC should be assigned to the VM Network, the 2nd NIC to the Storage network. Set both to VMXNET3.

SCSI controller should be the default, LSI Logic Parallel.

Choose Edit the Virtual Machine before completion.

If you have a second local drive (not one that you’ll use for your zpool) here you can add a second boot drive for a mirror.

Before finishing the creation of the VM click Add, select PCI Devices, and choose the LSI 2.

And be sure to go into the CD/DVD drive settings and set it to boot off the FreeNAS ISO.
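A side note on the storage network created in the "Create a Virtual Storage Network" step: when jumbo frames misbehave, a quick sanity check is a ping that is not allowed to fragment, sized to fill the MTU exactly. The sketch below works out that payload size (MTU minus the 20-byte IPv4 header and the 8-byte ICMP header) and checks that two interfaces really share a subnet. The 172.16.0.x and 192.168.0.x addresses are hypothetical placeholders, not the addresses used in this guide.

```python
# Sketch: payload size for a do-not-fragment ping at a given MTU, plus a
# subnet check. All addresses below are hypothetical placeholders.
import ipaddress

IPV4_HEADER_BYTES = 20  # IPv4 header without options
ICMP_HEADER_BYTES = 8   # ICMP echo request header


def max_ping_payload(mtu: int) -> int:
    """Largest ICMP payload that fits in a single unfragmented IPv4 packet."""
    return mtu - IPV4_HEADER_BYTES - ICMP_HEADER_BYTES


def same_subnet(a: str, b: str) -> bool:
    """True if two 'address/prefix' strings sit on the same IP network."""
    return ipaddress.ip_interface(a).network == ipaddress.ip_interface(b).network


if __name__ == "__main__":
    print(max_ping_payload(9000))  # 8972, for a jumbo-frame storage network
    print(max_ping_payload(1500))  # 1472, for a standard Ethernet MTU
    # Hypothetical VMkernel port and FreeNAS storage NIC on one storage subnet.
    print(same_subnet("172.16.0.2/24", "172.16.0.3/24"))   # True
    # Hypothetical management address must NOT share the storage subnet.
    print(same_subnet("192.168.0.4/24", "172.16.0.2/24"))  # False
```

On the ESXi side this would correspond to something like `vmkping -d -s 8972` against the FreeNAS storage address. If that fails while a 1472-byte payload works, some hop is not passing jumbo frames.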
Then finish creation of the VM.

FreeNAS VM Resource allocation.

Also, since FreeNAS will be driving the storage for the rest of VMware, it’s a good idea to make sure it has a higher priority for CPU and memory than other guests. Edit the virtual machine, under Resources set the CPU Shares to “High” to give FreeNAS a higher priority, then under Memory allocation lock the guest memory so that VMware doesn’t ever borrow from it for memory ballooning. You don’t want VMware to swap out ZFS’s ARC (memory read cache).

8. Install FreeNAS.

Boot the VM and install it to your SATA drive (or two of them to mirror boot). After it’s finished installing, reboot.

9. Install VMware Tools.

SKIP THIS STEP. As of FreeNAS 9.10.1 installing VMware Tools may no longer be necessary; you can skip step 9 and go to 1. Just leaving this for historical purposes.

In VMware right-click the FreeNAS VM, choose Guest, then Install/Upgrade VMware Tools. You’ll then choose interactive mode.

Mount the CD-ROM and copy the VMware install files to FreeNAS.

# mkdir /mnt/cdrom
VMware\ Tools /mnt/cdrom/. FreeBSD9. 0-amd.
# cp /mnt/cdrom/vmware-freebsd-tools. FreeBSD9. 0-amd.

Once installed, navigate to the Web.

FlexPod Data Center with Oracle RAC on Oracle VM with 7-Mode
Deployment Guide for FlexPod with Oracle Database 1. Release 2 RAC on Oracle VM 3.

November 2. 2, 2.

Building Architectures to Solve Business Problems

About the Authors

Niranjan, Technical Marketing Engineer, SAVBU, Cisco Systems. Niranjan Mohapatra is a Technical Marketing Engineer in Cisco Systems Data Center Group (DCG) and specialist on Oracle RAC RDBMS. He has over 14 years of extensive experience on Oracle RAC Database and associated tools. Niranjan has worked as a TME and a DBA handling production systems in various organizations. He holds a Master of Science (MSc) degree in Computer Science and is also an Oracle Certified Professional (OCP - DBA) and NetApp accredited storage architect. Niranjan also has strong background in Cisco UCS, NetApp Storage and Virtualization.

Acknowledgment

For their support and contribution to the design, validation, and creation of the Cisco Validated Design, I would like to thank:

• Siva Sivakumar - Cisco
• Vadiraja Bhatt - Cisco
• Tushar Patel - Cisco
• Ramakrishna Nishtala - Cisco
• John McAbel - Cisco
• Steven Schuettinger - NetApp

The CVD program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information visit: http://www.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.
The Cisco implementation of TCP header compression is an adaptation of a program developed by the University of California, Berkeley (UCB) as part of UCB's public domain version of the UNIX operating system. All rights reserved. Copyright © 1. 98. Regents of the University of California.

Cisco and the Cisco Logo are trademarks of Cisco Systems, Inc. U.S. and other countries. A listing of Cisco's trademarks can be found at http://www. Third party trademarks mentioned are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. R)

Any Internet Protocol (IP) addresses and phone numbers used in this document are not intended to be actual addresses and phone numbers. Any examples, command display output, network topology diagrams, and other figures included in the document are shown for illustrative purposes only. Any use of actual IP addresses or phone numbers in illustrative content is unintentional and coincidental.

Cisco Systems, Inc. All rights reserved.

FlexPod Data Center with Oracle RAC on Oracle VM

Executive Summary

Industry trends indicate a vast data center transformation toward shared infrastructures. Enterprise customers are moving away from silos of information and moving toward shared infrastructures to virtualized environments and eventually to the cloud to increase agility and operational efficiency, optimize resource utilization, and reduce costs.

FlexPod is a pretested data center solution built on a flexible, scalable, shared infrastructure consisting of Cisco UCS servers with Cisco Nexus® switches and NetApp unified storage systems running Data ONTAP. The FlexPod components are integrated and standardized to help you eliminate the guesswork and achieve timely, repeatable, consistent deployments.
